Author Archives: Leon Derczynski

Session chairs

Here is a list of sessions and chairs for the main conference:

- Eva Hajicova Session 1-1-a – Co-reference. Tuesday 21st August, 11:00 – 12:20

- Fabiola Henri Session 1-1-b – Low-resource languages. Tuesday 21st August, 11:00 – 12:20

- Junichi Tsujii Session 1-1-c – Parsing. Tuesday 21st August, 11:00 – 12:20

- Hen-Hsen Huang Session 1-2-a – Discourse relations. Tuesday 21st August, 13:50 – 15:50

- Jan Hajic Session 1-2-b – Machine translation. Tuesday 21st August, 13:50 – 15:50

- Chengqing Zong Session 1-2-c – Named entities. Tuesday 21st August, 13:50 – 15:50

- Daisuke Kawahara Session 1-3-a – Aspect-based sentiment, Ethics. Tuesday 21st August, 16:20 – 18:00

- Hayato Kobayashi Session 1-3-b – Relation extraction, Summarization. Tuesday 21st August, 16:20 – 18:00

- Yu-Nung (Vivian) Chen Session 1-3-c – Dialogue systems. Tuesday 21st August, 16:20 – 18:00

- Marcos Zampieri Session 2-1-a – Language change, Historical linguistics. Wednesday 22nd August, 10:30 – 11:50

- Sung-Hyon Myaeng Session 2-1-b – Embedding creation. Wednesday 22nd August, 10:30 – 11:50

- Wei Xu Session 2-1-c – ML methods. Wednesday 22nd August, 10:30 – 11:50

- Donia Scott Session 3-1-a – Generation. Thursday 23rd August, 10:30 – 12:10

- Tim Baldwin Session 3-1-b – Embedding creation. Thursday 23rd August, 10:30 – 12:10

- Chu-Ren Huang Session 3-1-c – Humor, rumor, sarcasm & spam. Thursday 23rd August, 10:30 – 12:10

- Hannah Rohde Session 3-2-a – Sentiment. Thursday 23rd August, 13:40 – 15:20

- Yuji Matsumoto Session 3-2-b – IE. Thursday 23rd August, 13:40 – 15:20

- Chenhui Chu Session 3-2-c – Multimodal processing, ASR, NLI. Thursday 23rd August, 13:40 – 15:20

- Sanja Stajner Session 3-3-a – Applications. Thursday 23rd August, 15:50 – 17:30

- Min-Yen Kan Session 3-3-b – Distributional semantics. Thursday 23rd August, 15:50 – 17:30

- Rada Mihalcea Session 3-3-c – Emotion. Thursday 23rd August, 15:50 – 17:30

- Chaitanya P. Shivade Session 4-1-a – Question answering. Friday 24th August, 10:30 – 12:30

- Roman Klinger Session 4-1-b – Rumor. Friday 24th August, 10:30 – 12:30

- Nicoletta Calzolari Session 4-1-c – Second language, Biomedical. Friday 24th August, 10:30 – 12:30

Presenting your academic work at a conference – applicable tips and advice

by Nanna Inie, researcher and practitioner in Digital Design at Aarhus University.

Everybody likes a good conference presentation. It is your chance to catch the interest of a room of peers who might cite your work and help spread it for you. Whether you are presenting a talk or a poster, you have the opportunity to spark the interest of those conference attendees who just happened to be there because you were co-located with a talk or poster they actually came to see.

Perhaps even more importantly, you also take upon yourself the risk of boring the living hell out of those attendees who chose to give you just a little bit of their most valuable resources: their time and attention. That is something to be respectful of.

In this post I would like to share some applicable tips for presentations – both oral and posters. While academia is certainly a distinct communication genre with its own merit, and conference talks should not aim to be TED talks or advertising campaigns, there are some key rhetorical and visual strategies that would improve most academic presentations. After all, your goal is to convince your audience that your work is trustworthy, thorough, and above all interesting, so anything that might make that conclusion easier for them is a win for you.

PRESENTING YOUR WORK WITH TALKS AND SLIDES

Structure is everything. The general rule is to give the introduction 10% of your speech, the content 80%, and the conclusion 10%. The next general rule is to relate everything you include in your speech to one single purpose. That does not mean you cannot tell stories about your data or your experiments, but do so only if they help exemplify the point you are trying to make.

As an academic, you are lucky enough to have already written the content you are trying to communicate – but your presentation should not just be a summary of your paper. It should be better and more appetizing: you have the time to focus on the really juicy parts of your results. Unless related work is literally a cornerstone of your contribution, is it necessary for your point? For every single piece of information you put in your talk, ask: would the talk suffer if I took this out? What does it contribute to the purpose? And is there a way I could amplify its contribution to the point, even if this means telling the audience again why I am including this information?

Your first step is to decide which one core message you would like the audience to leave your talk with. If they forget everything else, what is the one sentence you want them to remember (apart from “cite my work”)? It’s probably in your conclusion somewhere, but you might find it in the discussion, the results or even your research question. Once you have decided what the main purpose of your talk is, you can start building your talk around it. Here are 5 applicable tips for how to get your message across in a confident, convincing way:

1. Use crescendo. Keep the audience’s attention throughout your speech by building to a climax, rather than peaking too soon. Conference talks are quite short, and this works to your advantage: the audience barely has time to get bored. If you pique their interest early, they barely have time to drift away before you make your point (NB: starting off by presenting related work does not pique your audience’s interest!). Once you have made your point, end quickly. For a short example of a talk that does this brilliantly, I refer you to the TED talk “How to start a movement” by Derek Sivers (https://www.ted.com/talks/derek_sivers_how_to_start_a_movement). In this talk, Derek Sivers manages to tell his story, exemplified by a video recording in real time, and it works perfectly as a crescendo, building the audience’s interest in “What on earth is this going to lead to?”. Once the video has ended, he recaps his points using no slides, ending quickly thereafter.

2. Pick a narrative structure. This will help your speech to be more memorable to your audience. Here are 3 of the most common speech narratives (there are many forms, but these are applicable to most academic presentations):

The Tower Structure: This is how many academic talks are structured. You use bits and pieces of information (which are interesting to the audience) to build your argument. Once you have finished this structure, you can show the audience the power of the totality of the argument you have created.

Mystery Structure: The mystery structure is about presenting a problem or question to your audience that they are desperate to know the answer to. You want to keep them in the dark throughout your talk for this structure, not revealing the answer until the very end. You might present hints and clues to the solution along the way, including the audience in your journey towards your crucial message.

Ping Pong Structure: The ping pong structure is ideal if you expect the audience to contradict your point. In this structure you present both sides of the argument, one after another, in such a way that the audience can follow both sides and stay curious about which side ultimately wins.

3. Consider adding pauses rather than additional information. Pauses are as powerful as white space in posters. As you may have noticed, almost all TED talks that use slides work with “break slides” – deliberately empty slides that leave the screen black and force the audience’s attention back to you. If you are building your argument and want to add a little extra weight to a sentence, consider pausing for 4-5 seconds after it (5 seconds can feel like an eternity when you are presenting, but to the audience it is just enough time to let the argument sink in), and perhaps even repeat the argument in a slightly different way after your pause. You might also consider leaving your screen without slides for the introduction of your talk, to make sure you have the audience’s undivided attention, or leaving some slides black when you want to explain something technical that needs the audience’s concentration.

4. Support with slides, don’t explain. That brings us to a little discussion about slides. Slides can be fantastic and they can be awful. They can support and they can distract, depending on how they are used. You have probably heard the advice to keep your slides to bullets only. It’s still true. But it doesn’t necessarily solve anything – as it turns out, bullets can be just as long and text-heavy as normal sentences. Do you actually need slides to present your work? I would argue that you don’t – unless you have visual material that strongly underpins your crucial point: models, images, graphs. Other than that, slides should not really be necessary. If you would like to use slides, use them to support the audience, not to support you. That’s what your notes are for. There is nothing wrong with writing your key message on a slide so your peers can photograph and tweet it, but then think about what your peers would consider worth tweeting. And make it fun – don’t be afraid to add moving images or videos (check the sound though), especially of your data. It is always fun to see the data – even if your data is a program, consider doing a screencast of it running and adding that as a silent video in the background while you explain what is cool about it.

5. Stay enthusiastic. If you do not think your results are fun and interesting, chances are your audience won’t either. Try your very best to identify exactly what made you interested in this problem originally, and convey that to your audience. Sometimes that involves explaining how your results might be used in the future – I have sometimes taken the liberty of adding slides that were just called “Imagine a thing that …” and used them to explain how my results could be transformed into systems that would change the world. These were sometimes illustrated by less-than-perfect stick figures – but the point here is not to show off as a designer, but to show the audience that I really, really want to tell them how fantastic this system could be – even if I couldn’t draw it very well.

HOW TO PRESENT WITH POSTERS

The number one mistake academic posters make is cramming in too much information on a piece of paper. Depending on how your (published) paper is written, your poster often does not need more information than is in the conclusion section:

- What is the research question(s)?

- How have you engaged with your data (type of study, experiment, etc.)?

- What are your results?

- What are the implications of your results?

- Authors, affiliation, funding.

That’s it. Most of these should be explainable in one to three bullet points of one or two sentences each, not much more. If your poster is based on a paper, you can print out copies of the paper and keep those next to the poster, so that interested parties can grab one.

Layout

Readers of left-to-right writing systems read from left to right and top to bottom, so that is the order in which you have to help them digest the information you would like to present. Clearly defined boxes make it easier for the eye to navigate the poster, especially in a horizontal (landscape) layout. Boxes do not have to be defined by borders (in fact, if you do not know what you are doing, I highly advise against using borders), but can be created by background colors or even white space. When you design posters, white space or negative space should be your new best friend – at least 40% of the poster should be free of text. This will bring the text that did make the cut much more into focus.

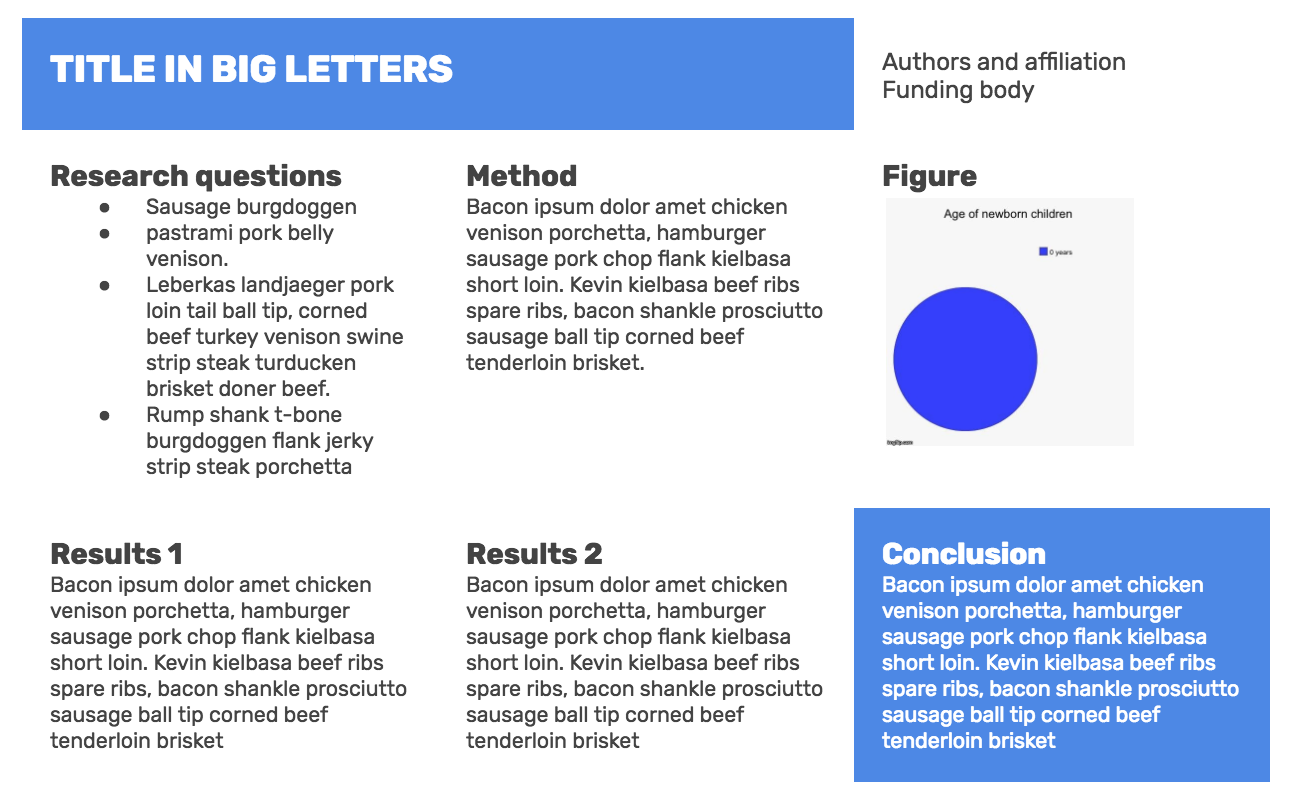

Consider highlighting your most interesting points with a colored box and white text, as for the title and conclusion below (notice how the other text seems “boxed” by white space but without actually having borders):

In my opinion, if you are not using a proper layout program (like Adobe InDesign), don’t be afraid of using tables to help you get that alignment right. It is much better to keep the layout conservative and maintain proper alignment than it is to experiment too much and end up with boxes that are a couple of centimeters off because PowerPoint just wants to watch the world burn. In the image above, I have just created one big table with three vertical columns, merged the two top left cells and, most importantly, adjusted the cell padding (the space, in pixels, between the cell wall and the cell content) to be high, thus automatically getting that nice white space.

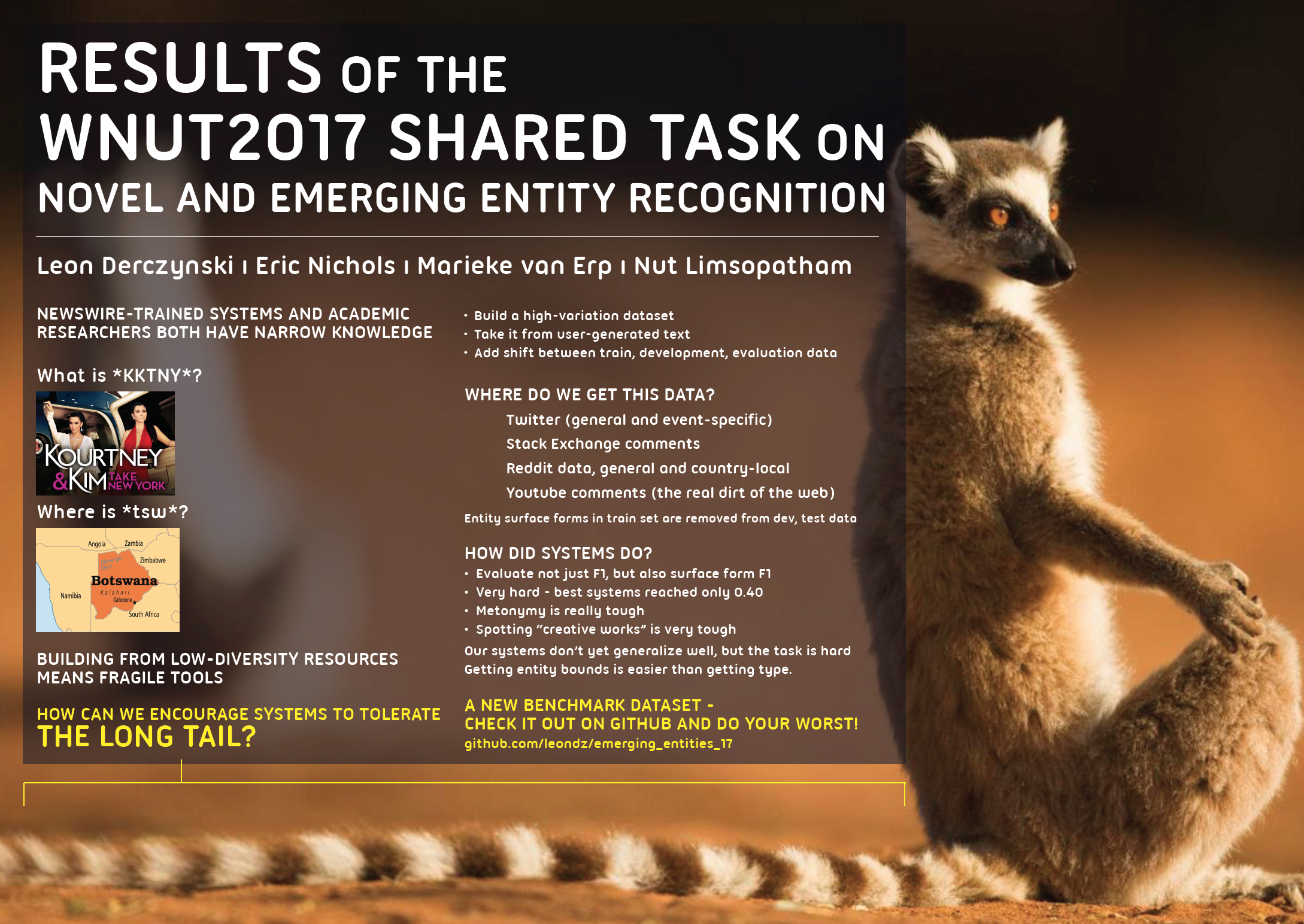

Finally, don’t underestimate the power of an attention-grabbing poster. In the poster below, the concept of “long tail” was translated into a lemur, giving an opportunity to use a really big picture of a cute animal. Cheap trick as it might be, it actually does attract people’s attention, and if you are hanging out by your poster for a couple of hours anyway, you might as well not do it alone.

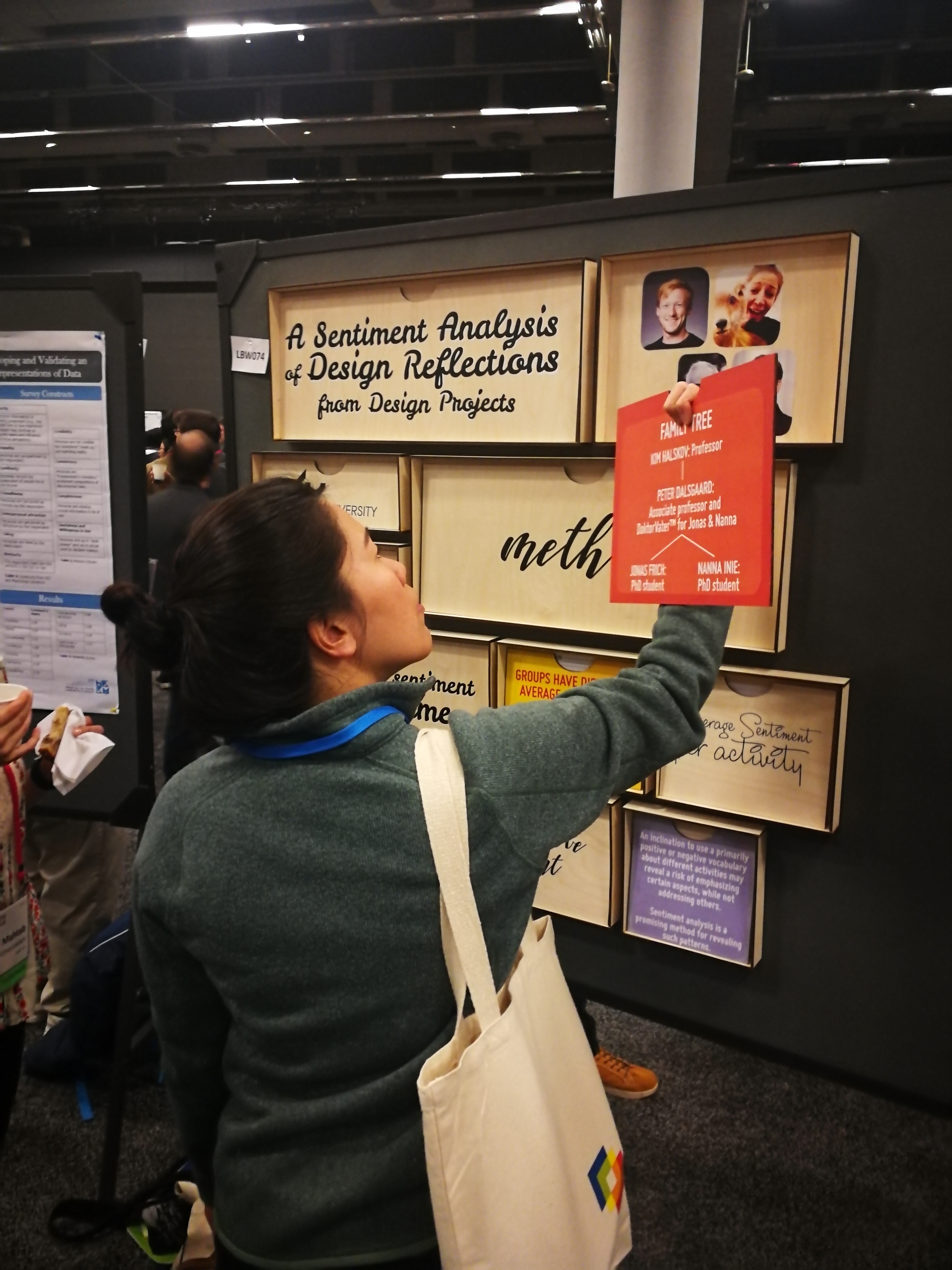

Other techniques can make a poster attractive. The two below are made of wood; the first is interactive, and you turn round sections of it to reveal information. The second can be rearranged, as its parts are connected with velcro – but the novel medium is enticing in itself.

Graphic resources

If you wish to upgrade the visuals of your poster, I highly recommend looking into three elements: images, icons, and fonts. I’ve added three resources for these that are free and easy to use:

Photos: https://www.pexels.com/

Gorgeous, free stock photos that will make your slides or posters so much more appealing

Icons: Looking for simpler illustrations? https://www.flaticon.com/categories is your friend!

Fonts: Sans-serif is generally better looking on screens and for headlines. https://www.fontsquirrel.com/ has many beautiful, free fonts – if you just need something that works quickly, you can’t go too wrong with Open Sans, Helvetica Neue or Rubik – bold for headlines and light or normal for body text.

Best of luck for your presentation!

Nanna Inie is finishing up a PhD in Digital Design at Aarhus University. As well as being founder of the largest TEDx event in Denmark, Nanna has previously been acclaimed with audience favorite poster at CHI during a stay at the UCSD Design Lab, and owns a video production company.

COLING 2018 Accepted Papers

Here is the list of papers accepted at COLING 2018, to appear in Santa Fe. This list was delayed until the best paper process was completed, to make sure that these awards were selected without committee members being able to know the identity of paper authors.

Congratulations to all authors of accepted papers; we look forward to seeing you in New Mexico!

- A Comparison of Transformer and Recurrent Neural Networks on Multilingual Neural Machine Translation – Surafel Melaku Lakew, Mauro Cettolo and Marcello Federico.

- A Computational Model for the Linguistic Notion of Morphological Paradigm – Miikka Silfverberg, Ling Liu and Mans Hulden.

- A Knowledge-Augmented Neural Network Model for Implicit Discourse Relation Classification – Yudai Kishimoto, Yugo Murawaki and Sadao Kurohashi.

- A Lexicon-Based Supervised Attention Model for Neural Sentiment Analysis – Yicheng Zou, Tao Gui, Qi Zhang and Xuanjing Huang.

- A Multi-Attention based Neural Network with External Knowledge for Story Ending Predicting Task – Qian Li, Ziwei Li, Jin-Mao Wei, Yanhui Gu, Adam Jatowt and Zhenglu Yang.

- A New Approach to Animacy Detection – Labiba Jahan, Geeticka Chauhan and Mark Finlayson.

- A New Concept of Deep Reinforcement Learning based Augmented General Tagging System – Yu Wang, Abhishek Patel and Hongxia Jin.

- A Position-aware Bidirectional Attention Network for Aspect-level Sentiment Analysis – Shuqin Gu, Lipeng Zhang, Yuexian Hou and Yin Song.

- A Practical Incremental Learning Framework For Sparse Entity Extraction – Hussein Al-Olimat, Steven Gustafson, Jason Mackay, Krishnaprasad Thirunarayan and Amit Sheth.

- A Prospective-Performance Network to Alleviate Myopia in Beam Search for Response Generation – Zongsheng Wang, Yunzhi Bai, Bowen Wu, Zhen Xu, Zhuoran Wang and Baoxun Wang.

- A Reinforcement Learning Framework for Natural Question Generation using Bi-discriminators – Zhihao Fan, Zhongyu Wei, Siyuan Wang, Yang Liu and Xuanjing Huang.

- A Retrospective Analysis of the Fake News Challenge Stance-Detection Task – Andreas Hanselowski, Avinesh PVS, Benjamin Schiller, Felix Caspelherr, Debanjan Chaudhuri, Christian M. Meyer and Iryna Gurevych.

- Ab Initio: Automatic Latin Proto-word Reconstruction – Alina Maria Ciobanu and Liviu P. Dinu.

- Abstract Meaning Representation for Multi-Document Summarization – Kexin Liao, Logan Lebanoff and Fei Liu.

- Abstractive Unsupervised Multi-Document Summarization using Paraphrastic Sentence Fusion – Mir Tafseer Nayeem, Tanvir Ahmed Fuad and Yllias Chali.

- Adopting the Word-Pair-Dependency-Triplets with Individual Comparison for Natural Language Inference – Qianlong Du, Chengqing Zong and Keh-Yih Su.

- Adversarial Domain Adaptation for Variational Neural Language Generation in Dialogue Systems – Van-Khanh Tran and Le-Minh Nguyen.

- Adversarial Multi-lingual Neural Relation Extraction – Xiaozhi Wang, Xu Han, Yankai Lin, Zhiyuan Liu and Maosong Sun.

- Aff2Vec: Affect–Enriched Distributional Word Representations – Sopan Khosla, Niyati Chhaya and Kushal Chawla.

- All-in-one: Multi-task Learning for Rumour Verification – Elena Kochkina, Maria Liakata and Arkaitz Zubiaga.

- An Attribute Enhanced Domain Adaptive Model for Cold-Start Spam Review Detection – Zhenni You, Tieyun Qian and Bing Liu.

- An Empirical Study on Fine-Grained Named Entity Recognition – Khai Mai, Thai-Hoang Pham, Minh Trung Nguyen, Nguyen Tuan Duc, Danushka Bollegala, Ryohei Sasano and Satoshi Sekine.

- An Exploration of Three Lightly-supervised Representation Learning Approaches for Named Entity Classification – Ajay Nagesh and Mihai Surdeanu.

- AnlamVer: Semantic Model Evaluation Dataset for Turkish – Word Similarity and Relatedness – Gökhan Ercan and Olcay Taner Yıldız.

- Answerable or Not: Devising a Dataset for Extending Machine Reading Comprehension – Mao Nakanishi, Tetsunori Kobayashi and Yoshihiko Hayashi.

- Ask No More: Deciding when to guess in referential visual dialogue – Ravi Shekhar, Tim Baumgärtner, Aashish Venkatesh, Elia Bruni, Raffaella Bernardi and Raquel Fernández.

- Aspect and Sentiment Aware Abstractive Review Summarization – Min Yang, Qiang Qu, Ying Shen, Qiao Liu, Wei Zhao and Jia Zhu.

- Aspect-based summarization of pros and cons in unstructured product reviews – Florian Kunneman, Sander Wubben, Antal van den Bosch and Emiel Krahmer.

- Assessing Composition in Sentence Vector Representations – Allyson Ettinger, Ahmed Elgohary, Colin Phillips and Philip Resnik.

- Attending Sentences to detect Satirical Fake News – Sohan De Sarkar, Fan Yang and Arjun Mukherjee.

- Authorless Topic Models: Biasing Models Away from Known Structure – Laure Thompson and David Mimno.

- Authorship Attribution By Consensus Among Multiple Features – Jagadeesh Patchala and Raj Bhatnagar.

- Automated Fact Checking: Task Formulations, Methods and Future Directions – James Thorne and Andreas Vlachos.

- Automated Scoring: Beyond Natural Language Processing – Nitin Madnani and Aoife Cahill.

- Automatic Detection of Fake News – Verónica Pérez-Rosas, Bennett Kleinberg, Alexandra Lefevre and Rada Mihalcea.

- Bridge Video and Text with Cascade Syntactic Structure – Guolong Wang, Zheng Qin, Kaiping Xu, Kai Huang and Shuxiong Ye.

- Bringing replication and reproduction together with generalisability in NLP: Three reproduction studies for Target Dependent Sentiment Analysis – Andrew Moore and Paul Rayson.

- Can Rumour Stance Alone Predict Veracity? – Sebastian Dungs, Ahmet Aker, Norbert Fuhr and Kalina Bontcheva.

- CASCADE: Contextual Sarcasm Detection in Online Discussion Forums – Devamanyu Hazarika, Soujanya Poria, Sruthi Gorantla, Erik Cambria, Roger Zimmermann and Rada Mihalcea.

- Challenges and Opportunities of Applying Natural Language Processing in Business Process Management – Han Van der Aa, Josep Carmona, Henrik Leopold, Jan Mendling and Lluís Padró.

- Challenges of language technologies for the indigenous languages of the Americas – Manuel Mager, Ximena Gutierrez-Vasques, Gerardo Sierra and Ivan Meza-Ruiz.

- Context-Sensitive Generation of Open-Domain Conversational Responses – Wei-Nan Zhang, Yiming Cui, Yifa Wang, Qingfu Zhu, Lingzhi Li, Lianqiang Zhou and Ting Liu.

- Contextual String Embeddings for Sequence Labeling – Alan Akbik, Duncan Blythe and Roland Vollgraf.

- Cooperative Denoising for Distantly Supervised Relation Extraction – Kai Lei, Daoyuan Chen, Yaliang Li, Nan Du, Min Yang, Wei Fan and Ying Shen.

- Cross-lingual Argumentation Mining: Machine Translation (and a bit of Projection) is All You Need! – Steffen Eger, Johannes Daxenberger, Christian Stab and Iryna Gurevych.

- Deep Enhanced Representation for Implicit Discourse Relation Recognition – Hongxiao Bai and Hai Zhao.

- Dependent Gated Reading for Cloze-Style Question Answering – Reza Ghaeini, Xiaoli Fern, Hamed Shahbazi and Prasad Tadepalli.

- Design Challenges and Misconceptions in Neural Sequence Labeling – Jie Yang, Shuailong Liang and Yue Zhang.

- Design Challenges in Named Entity Transliteration – Yuval Merhav and Stephen Ash.

- Dialogue-act-driven Conversation Model: An Experimental Study – Harshit Kumar, Arvind Agarwal and Sachindra Joshi.

- Distance-Free Modeling of Multi-Predicate Interactions in End-to-End Japanese Predicate-Argument Structure Analysis – Yuichiroh Matsubayashi and Kentaro Inui.

- Distinguishing affixoid formations from compounds – Josef Ruppenhofer, Michael Wiegand, Rebecca Wilm and Katja Markert.

- Does Higher Order LSTM Have Better Accuracy for Segmenting and Labeling Sequence Data? – Yi Zhang, Xu Sun, Shuming Ma, Yang Yang and Xuancheng Ren.

- Dynamic Multi-Level Multi-Task Learning for Sentence Simplification – Han Guo, Ramakanth Pasunuru and Mohit Bansal.

- Effective Attention Modeling for Aspect-Level Sentiment Classification – Ruidan He, Wee Sun Lee, Hwee Tou Ng and Daniel Dahlmeier.

- Embedding Words as Distributions with a Bayesian Skip-gram Model – Arthur Bražinskas, Serhii Havrylov and Ivan Titov.

- Emotion Detection and Classification in a Multigenre Corpus with Joint Multi-Task Deep Learning – Shabnam Tafreshi and Mona Diab.

- Emotion Representation Mapping for Automatic Lexicon Construction (Mostly) Performs on Human Level – Sven Buechel and Udo Hahn.

- Employing Text Matching Network to Recognise Nuclearity in Chinese Discourse – Sheng Xu, Peifeng Li, Guodong Zhou and Qiaoming Zhu.

- Enhanced Aspect Level Sentiment Classification with Auxiliary Memory – Peisong Zhu and Tieyun Qian.

- Enhancing Sentence Embedding with Generalized Pooling – Qian Chen, Zhen-Hua Ling and Xiaodan Zhu.

- Exploiting Structure in Representation of Named Entities using Active Learning – Nikita Bhutani, Kun Qian, Yunyao Li, H. V. Jagadish, Mauricio Hernandez and Mitesh Vasa.

- Exploiting Syntactic Structures for Humor Recognition – Lizhen Liu, Donghai Zhang and Wei Song.

- Exploratory Neural Relation Classification for Domain Knowledge Acquisition – Yan Fan, Chengyu Wang and Xiaofeng He.

- Exploring the Influence of Spelling Errors on Lexical Variation Measures – Ryo Nagata, Taisei Sato and Hiroya Takamura.

- Expressively vulgar: The socio-dynamics of vulgarity and its effects on sentiment analysis in social media – Isabel Cachola, Eric Holgate, Daniel Preoţiuc-Pietro and Junyi Jessy Li.

- Extracting Parallel Sentences with Bidirectional Recurrent Neural Networks to Improve Machine Translation – Francis Grégoire and Philippe Langlais.

- Extractive Headline Generation Based on Learning to Rank for Community Question Answering – Tatsuru Higurashi, Hayato Kobayashi, Takeshi Masuyama and Kazuma Murao.

- Folksonomication: Predicting Tags for Movies from Plot Synopses using Emotion Flow Encoded Neural Network – Sudipta Kar, Suraj Maharjan and Thamar Solorio.

- From Text to Lexicon: Bridging the Gap between Word Embeddings and Lexical Resources – Ilia Kuznetsov and Iryna Gurevych.

- Fusing Recency into Neural Machine Translation with an Inter-Sentence Gate Model – Shaohui Kuang and Deyi Xiong.

- GenSense: A Generalized Sense Retrofitting Model – Yang-Yin Lee, Ting-Yu Yen, Hen-Hsen Huang, Yow-Ting Shiue and Hsin-Hsi Chen.

- Graphene: Semantically-Linked Propositions in Open Information Extraction – Matthias Cetto, Christina Niklaus, André Freitas and Siegfried Handschuh.

- Grounded Textual Entailment – Hoa Vu, Claudio Greco, Aliia Erofeeva, Somayeh Jafaritazehjan, Guido Linders, Marc Tanti, Alberto Testoni, Raffaella Bernardi and Albert Gatt.

- How emotional are you? Neural Architectures for Emotion Intensity Prediction in Microblogs – Devang Kulshreshtha, Pranav Goel and Anil Kumar Singh.

- Hybrid Attention based Multimodal Network for Spoken Language Classification – Yue Gu, Kangning Yang, Shiyu Fu, Shuhong Chen, Xinyu Li and Ivan Marsic.

- Implicit Discourse Relation Recognition using Neural Tensor Network with Interactive Attention and Sparse Learning – Fengyu Guo, Ruifang He, Di Jin, Jianwu Dang, Longbiao Wang and Xiangang Li.

- Improving Neural Machine Translation by Incorporating Hierarchical Subword Features – Makoto Morishita, Jun Suzuki and Masaaki Nagata.

- Integrating Question Classification and Deep Learning for improved Answer Selection – Harish Tayyar Madabushi, Mark Lee and John Barnden.

- Investigating Productive and Receptive Knowledge: A Profile for Second Language Learning – Leonardo Zilio, Rodrigo Wilkens and Cédrick Fairon.

- Joint Modeling of Structure Identification and Nuclearity Recognition in Macro Chinese Discourse Treebank – Xiaomin Chu, Feng Jiang, Yi Zhou, Guodong Zhou and Qiaoming Zhu.

- Knowledge as A Bridge: Improving Cross-domain Answer Selection with External Knowledge – Yang Deng, Ying Shen, Min Yang, Yaliang Li, Nan Du, Wei Fan and Kai Lei.

- Learning Features from Co-occurrences: A Theoretical Analysis – Yanpeng Li.

- Learning from Measurements in Crowdsourcing Models: Inferring Ground Truth from Diverse Annotation Types – Paul Felt, Eric Ringger, Kevin Seppi and Jordan Boyd-Graber.

- Learning Sentiment Composition from Sentiment Lexicons – Orith Toledo-Ronen, Roy Bar-Haim, Alon Halfon, Charles Jochim, Amir Menczel, Ranit Aharonov and Noam Slonim.

- Learning Target-Specific Representations of Financial News Documents For Cumulative Abnormal Return Prediction – Junwen Duan, Yue Zhang, Xiao Ding, Ching-Yun Chang and Ting Liu.

- Learning to Generate Word Representations using Subword Information – Yeachan Kim, Kang-Min Kim, Ji-Min Lee and SangKeun Lee.

- Learning Word Meta-Embeddings by Autoencoding – Danushka Bollegala and Cong Bao.

- Low-resource Cross-lingual Event Type Detection via Distant Supervision with Minimal Effort – Aldrian Obaja Muis, Naoki Otani, Nidhi Vyas, Ruochen Xu, Yiming Yang, Teruko Mitamura and Eduard Hovy.

- Lyrics Segmentation: Textual Macrostructure Detection using Convolutions – Michael Fell, Yaroslav Nechaev, Elena Cabrio and Fabien Gandon.

- Measuring the Diversity of Automatic Image Descriptions – Emiel van Miltenburg, Desmond Elliott and Piek Vossen.

- Model-Free Context-Aware Word Composition – Bo An, Xianpei Han and Le Sun.

- Modeling Coherence for Neural Machine Translation with Dynamic and Topic Caches – Shaohui Kuang, Deyi Xiong, Weihua Luo and Guodong Zhou.

- Modeling Semantics with Gated Graph Neural Networks for Knowledge Base Question Answering – Daniil Sorokin and Iryna Gurevych.

- Modeling with Recurrent Neural Networks for Open Vocabulary Slots – Jun-Seong Kim, Junghoe Kim, SeungUn Park, Kwangyong Lee and Yoonju Lee.

- Multilevel Heuristics for Rationale-Based Entity Relation Classification in Sentences – Shiou Tian Hsu, Mandar Chaudhary and Nagiza Samatova.

- Multilingual Neural Machine Translation with Task-Specific Attention – Graeme Blackwood, Miguel Ballesteros and Todd Ward.

- Multimodal Grounding for Language Processing – Lisa Beinborn, Teresa Botschen and Iryna Gurevych.

- Neural Activation Semantic Models: Computational lexical semantic models of localized neural activations – Nikos Athanasiou, Elias Iosif and Alexandros Potamianos.

- Neural Collective Entity Linking – Yixin Cao, Lei Hou, Juanzi Li and Zhiyuan Liu.

- Neural Machine Translation with Decoding History Enhanced Attention – Mingxuan Wang.

- Neural Network Models for Paraphrase Identification, Semantic Textual Similarity, Natural Language Inference, and Question Answering – Wuwei Lan and Wei Xu.

- Neural Relation Classification with Text Descriptions – Feiliang Ren, Di Zhou, Zhihui Liu, Yongcheng Li, Rongsheng Zhao, Yongkang Liu and Xiaobo Liang.

- Neural Transition-based String Transduction for Limited-Resource Setting in Morphology – Peter Makarov and Simon Clematide.

- Novelty Goes Deep. A Deep Neural Solution To Document Level Novelty Detection – Tirthankar Ghosal, Vignesh Edithal, Asif Ekbal, Pushpak Bhattacharyya, Srinivasa Satya Sameer Kumar Chivukula and George Tsatsaronis.

- On Adversarial Examples for Character-Level Neural Machine Translation – Javid Ebrahimi, Daniel Lowd and Dejing Dou.

- One-shot Learning for Question-Answering in Gaokao History Challenge – Zhuosheng Zhang and Hai Zhao.

- Open Information Extraction from Conjunctive Sentences – Swarnadeep Saha and Mausam.

- Open Information Extraction on Scientific Text: An Evaluation – Paul Groth, Mike Lauruhn, Antony Scerri and Ron Daniel, Jr.

- Pattern-revising Enhanced Simple Question Answering over Knowledge Bases – Yanchao Hao, Hao Liu, Shizhu He, Kang Liu and Jun Zhao.

- Personalized Text Retrieval for Learners of Chinese as a Foreign Language – Chak Yan Yeung and John Lee.

- Predicting Stances from Social Media Posts using Factorization Machines – Akira Sasaki, Kazuaki Hanawa, Naoaki Okazaki and Kentaro Inui.

- Punctuation as Native Language Interference – Ilia Markov, Vivi Nastase and Carlo Strapparava.

- Quantifying training challenges of dependency parsers – Lauriane Aufrant, Guillaume Wisniewski and François Yvon.

- Recognizing Humour using Word Associations and Humour Anchor Extraction – Andrew Cattle and Xiaojuan Ma.

- Recurrent One-Hop Predictions for Reasoning over Knowledge Graphs – Wenpeng Yin, Yadollah Yaghoobzadeh and Hinrich Schütze.

- Relation Induction in Word Embeddings Revisited – Zied Bouraoui, Shoaib Jameel and Steven Schockaert.

- Representations and Architectures in Neural Sentiment Analysis for Morphologically Rich Languages: A Case Study from Modern Hebrew – Adam Amram, Anat Ben-David and Reut Tsarfaty.

- Rethinking the Agreement in Human Evaluation Tasks – Jacopo Amidei, Paul Piwek and Alistair Willis.

- RNN Simulations of Grammaticality Judgments on Long-distance Dependencies – Shammur Absar Chowdhury and Roberto Zamparelli.

- Self-Normalization Properties of Language Modeling – Jacob Goldberger and Oren Melamud.

- Semi-Supervised Disfluency Detection – Feng Wang, Zhen Yang, Wei Chen, Shuang Xu, Bo Xu and Qianqian Dong.

- Semi-Supervised Lexicon Learning for Wide-Coverage Semantic Parsing – Bo Chen, Bo An, Le Sun and Xianpei Han.

- Sequence-to-Sequence Data Augmentation for Dialogue Language Understanding – Yutai Hou, Yijia Liu, Wanxiang Che and Ting Liu.

- SGM: Sequence Generation Model for Multi-label Classification – Pengcheng Yang, Xu Sun, Wei Li, Shuming Ma, Wei Wu and Houfeng Wang.

- Simple Algorithms For Sentiment Analysis On Sentiment Rich, Data Poor Domains. – Prathusha K Sarma and William Sethares.

- Sprucing up the trees – Error detection in treebanks – Ines Rehbein and Josef Ruppenhofer.

- Stress Test Evaluation for Natural Language Inference – Aakanksha Naik, Abhilasha Ravichander, Norman Sadeh, Carolyn Rose and Graham Neubig.

- Structure-Infused Copy Mechanisms for Abstractive Summarization – Kaiqiang Song, Lin Zhao and Fei Liu.

- Structured Dialogue Policy with Graph Neural Networks – Lu Chen, Bowen Tan, Sishan Long and Kai Yu.

- Subword-augmented Embedding for Cloze Reading Comprehension – Zhuosheng Zhang, Yafang Huang and Hai Zhao.

- Systematic Study of Long Tail Phenomena in Entity Linking – Filip Ilievski, Piek Vossen and Stefan Schlobach.

- The Road to Success: Assessing the Fate of Linguistic Innovations in Online Communities – Marco Del Tredici and Raquel Fernández.

- They Exist! Introducing Plural Mentions to Coreference Resolution and Entity Linking – Ethan Zhou and Jinho D. Choi.

- Topic or Style? Exploring the Most Useful Features for Authorship Attribution – Yunita Sari, Mark Stevenson and Andreas Vlachos.

- Towards a unified framework for bilingual terminology extraction of single-word and multi-word terms – Jingshu Liu, Emmanuel Morin and Peña Saldarriaga.

- Towards identifying the optimal datasize for lexically-based Bayesian inference of linguistic phylogenies – Taraka Rama and Søren Wichmann.

- Transition-based Neural RST Parsing with Implicit Syntax Features – Nan Yu, Meishan Zhang and Guohong Fu.

- Treat us like the sequences we are: Prepositional Paraphrasing of Noun Compounds using LSTM – Girishkumar Ponkiya, Kevin Patel, Pushpak Bhattacharyya and Girish Palshikar.

- Triad-based Neural Network for Coreference Resolution – Yuanliang Meng and Anna Rumshisky.

- Two Local Models for Neural Constituent Parsing – Zhiyang Teng and Yue Zhang.

- Unsupervised Morphology Learning with Statistical Paradigms – Hongzhi Xu, Mitchell Marcus, Charles Yang and Lyle Ungar.

- Using J-K-fold Cross Validation To Reduce Variance When Tuning NLP Models – Henry Moss, David Leslie and Paul Rayson.

- Variational Attention for Sequence-to-Sequence Models – Hareesh Bahuleyan, Lili Mou, Olga Vechtomova and Pascal Poupart.

- What represents “style” in authorship attribution? – Kalaivani Sundararajan and Damon Woodard.

- Who is Killed by Police: Introducing Supervised Attention for Hierarchical LSTMs – Minh Nguyen and Thien Nguyen.

- Word-Level Loss Extensions for Neural Temporal Relation Classification – Artuur Leeuwenberg and Marie-Francine Moens.

- Zero Pronoun Resolution with Attention-based Neural Network – Qingyu Yin, Yu Zhang, Wei-Nan Zhang, Ting Liu and William Yang Wang.

- A Dataset for Building Code-Mixed Goal Oriented Conversation Systems – Suman Banerjee, Nikita Moghe, Siddhartha Arora and Mitesh M. Khapra.

- A Deep Dive into Word Sense Disambiguation with LSTM – Minh Le, Marten Postma, Jacopo Urbani and Piek Vossen.

- A Full End-to-End Semantic Role Labeler, Syntactic-agnostic Over Syntactic-aware? – Jiaxun Cai, Shexia He, Zuchao Li and Hai Zhao.

- A LSTM Approach with Sub-Word Embeddings for Mongolian Phrase Break Prediction – Rui Liu, Feilong Bao, Guanglai Gao, Hui Zhang and Yonghe Wang.

- A Neural Question Answering Model Based on Semi-Structured Tables – Hao Wang, Xiaodong Zhang, Shuming Ma, Xu Sun, Houfeng Wang and Mengxiang Wang.

- A Nontrivial Sentence Corpus for the Task of Sentence Readability Assessment in Portuguese – Sidney Evaldo Leal, Magali Sanches Duran and Sandra Maria Aluísio.

- A Pseudo Label based Dataless Naive Bayes Algorithm for Text Classification with Seed Words – Ximing Li and Bo Yang.

- A Reassessment of Reference-Based Grammatical Error Correction Metrics – Shamil Chollampatt and Hwee Tou Ng.

- A review of Spanish corpora annotated with negation – Salud María Jiménez-Zafra, Roser Morante, Maite Martin and L. Alfonso Urena Lopez.

- A Review on Deep Learning Techniques Applied to Answer Selection – Tuan Manh Lai, Trung Bui and Sheng Li.

- A Survey of Domain Adaptation for Neural Machine Translation – Chenhui Chu and Rui Wang.

- A Survey on Open Information Extraction – Christina Niklaus, Matthias Cetto, André Freitas and Siegfried Handschuh.

- A Survey on Recent Advances in Named Entity Recognition from Deep Learning models – Vikas Yadav and Steven Bethard.

- Adaptive Learning of Local Semantic and Global Structure Representations for Text Classification – Jianyu Zhao, Zhiqiang Zhan, Qichuan Yang, Yang Zhang, Changjian Hu, Zhensheng Li, Liuxin Zhang and Zhiqiang He.

- Adaptive Multi-Task Transfer Learning for Chinese Word Segmentation in Medical Text – Junjie Xing, Kenny Zhu and Shaodian Zhang.

- Adaptive Weighting for Neural Machine Translation – Yachao Li, Junhui Li and Min Zhang.

- Addressee and Response Selection for Multilingual Conversation – Motoki Sato, Hiroki Ouchi and Yuta Tsuboi.

- Adversarial Feature Adaptation for Cross-lingual Relation Classification – Bowei Zou, Zengzhuang Xu, Yu Hong and Guodong Zhou.

- AMR Beyond the Sentence: the Multi-sentence AMR corpus – Tim O’Gorman, Michael Regan, Kira Griffitt, Martha Palmer, Ulf Hermjakob and Kevin Knight.

- An Analysis of Annotated Corpora for Emotion Classification in Text – Laura Ana Maria Bostan and Roman Klinger.

- An Empirical Investigation of Error Types in Vietnamese Parsing – Quy Nguyen, Yusuke Miyao, Hiroshi Noji and Nhung Nguyen.

- An Evaluation of Neural Machine Translation Models on Historical Spelling Normalization – Gongbo Tang, Fabienne Cap, Eva Pettersson and Joakim Nivre.

- An Interpretable Reasoning Network for Multi-Relation Question Answering – Mantong Zhou, Minlie Huang and Xiaoyan Zhu.

- An Operation Network for Abstractive Sentence Compression – Naitong Yu, Jie Zhang, Minlie Huang and Xiaoyan Zhu.

- Ant Colony System for Multi-Document Summarization – Asma Al-Saleh and Mohamed El Bachir Menai.

- Argumentation Synthesis following Rhetorical Strategies – Henning Wachsmuth, Manfred Stede, Roxanne El Baff, Khalid Al Khatib, Maria Skeppstedt and Benno Stein.

- Arguments and Adjuncts in Universal Dependencies – Adam Przepiórkowski and Agnieszka Patejuk.

- Arrows are the Verbs of Diagrams – Malihe Alikhani and Matthew Stone.

- Assessing Quality Estimation Models for Sentence-Level Prediction – Hoang Cuong and Jia Xu.

- Attributed and Predictive Entity Embedding for Fine-Grained Entity Typing in Knowledge Bases – Hailong Jin, Lei Hou, Juanzi Li and Tiansi Dong.

- Author Profiling for Abuse Detection – Pushkar Mishra, Marco Del Tredici, Helen Yannakoudakis and Ekaterina Shutova.

- Authorship Identification for Literary Book Recommendations – Haifa Alharthi, Diana Inkpen and Stan Szpakowicz.

- Automatic Assessment of Conceptual Text Complexity Using Knowledge Graphs – Sanja Štajner and Ioana Hulpus.

- Automatically Creating a Lexicon of Verbal Polarity Shifters: Mono- and Cross-lingual Methods for German – Marc Schulder, Michael Wiegand and Josef Ruppenhofer.

- Automatically Extracting Qualia Relations for the Rich Event Ontology – Ghazaleh Kazeminejad, Claire Bonial, Susan Windisch Brown and Martha Palmer.

- Bridging resolution: Task definition, corpus resources and rule-based experiments – Ina Roesiger, Arndt Riester and Jonas Kuhn.

- Butterfly Effects in Frame Semantic Parsing: impact of data processing on model ranking – Alexandre Kabbach, Corentin Ribeyre and Aurélie Herbelot.

- Can Taxonomy Help? Improving Semantic Question Matching using Question Taxonomy – Deepak Gupta, Rajkumar Pujari, Asif Ekbal, Pushpak Bhattacharyya, Anutosh Maitra, Tom Jain and Shubhashis Sengupta.

- Character-Level Feature Extraction with Densely Connected Networks – Chanhee Lee, Young-Bum Kim, Dongyub Lee and Heuiseok Lim.

- Clausal Modifiers in the Grammar Matrix – Kristen Howell and Olga Zamaraeva.

- Combining Information-Weighted Sequence Alignment and Sound Correspondence Models for Improved Cognate Detection – Johannes Dellert.

- Convolutional Neural Network for Universal Sentence Embeddings – Xiaoqi Jiao, Fang Wang and Dan Feng.

- Corpus-based Content Construction – Balaji Vasan Srinivasan, Pranav Maneriker, Kundan Krishna and Natwar Modani.

- Correcting Chinese Word Usage Errors for Learning Chinese as a Second Language – Yow-Ting Shiue, Hen-Hsen Huang and Hsin-Hsi Chen.

- Cross-lingual Knowledge Projection Using Machine Translation and Target-side Knowledge Base Completion – Naoki Otani, Hirokazu Kiyomaru, Daisuke Kawahara and Sadao Kurohashi.

- Cross-media User Profiling with Joint Textual and Social User Embedding – Jingjing Wang, Shoushan Li, Mingqi Jiang, Hanqian Wu and Guodong Zhou.

- Crowdsourcing a Large Corpus of Clickbait on Twitter – Martin Potthast, Tim Gollub, Kristof Komlossy, Sebastian Schuster, Matti Wiegmann, Erika Patricia Garces Fernandez, Matthias Hagen and Benno Stein.

- Deconvolution-Based Global Decoding for Neural Machine Translation – Junyang Lin, Xu Sun, Xuancheng Ren, Shuming Ma, Jinsong Su and Qi Su.

- Deep Neural Networks at the Service of Multilingual Parallel Sentence Extraction – Ahmad Aghaebrahimian.

- deepQuest: A Framework for Neural-based Quality Estimation – Julia Ive, Frédéric Blain and Lucia Specia.

- Diachronic word embeddings and semantic shifts: a survey – Andrey Kutuzov, Lilja Øvrelid, Terrence Szymanski and Erik Velldal.

- DIDEC: The Dutch Image Description and Eye-tracking Corpus – Emiel van Miltenburg, Ákos Kádár, Ruud Koolen and Emiel Krahmer.

- Distantly Supervised NER with Partial Annotation Learning and Reinforcement Learning – Yaosheng Yang, Wenliang Chen, Zhenghua Li, Zhengqiu He and Min Zhang.

- Document-level Multi-aspect Sentiment Classification by Jointly Modeling Users, Aspects, and Overall Ratings – Junjie Li, Haitong Yang and Chengqing Zong.

- Double Path Networks for Sequence to Sequence Learning – Kaitao Song, Xu Tan, Di He, Jianfeng Lu, Tao Qin and Tie-Yan Liu.

- Dynamic Feature Selection with Attention in Incremental Parsing – Ryosuke Kohita, Hiroshi Noji and Yuji Matsumoto.

- Embedding WordNet Knowledge for Textual Entailment – Yunshi Lan and Jing Jiang.

- Encoding Sentiment Information into Word Vectors for Sentiment Analysis – Zhe Ye, Fang Li and Timothy Baldwin.

- Enhancing General Sentiment Lexicons for Domain-Specific Use – Tim Kreutz and Walter Daelemans.

- Enriching Word Embeddings with Domain Knowledge for Readability Assessment – Zhiwei Jiang, Qing Gu, Yafeng Yin and Daoxu Chen.

- Ensure the Correctness of the Summary: Incorporate Entailment Knowledge into Abstractive Sentence Summarization – Haoran Li, Junnan Zhu, Jiajun Zhang and Chengqing Zong.

- Evaluating the text quality, human likeness and tailoring component of PASS: A Dutch data-to-text system for soccer – Chris van der Lee, Bart Verduijn, Emiel Krahmer and Sander Wubben.

- Evaluation of Unsupervised Compositional Representations – Hanan Aldarmaki and Mona Diab.

- Farewell Freebase: Migrating the SimpleQuestions Dataset to DBpedia – Michael Azmy, Peng Shi, Ihab Ilyas and Jimmy Lin.

- Fast and Accurate Reordering with ITG Transition RNN – Hao Zhang, Axel Ng and Richard Sproat.

- Few-Shot Charge Prediction with Discriminative Legal Attributes – Zikun Hu, Xiang Li, Cunchao Tu, Zhiyuan Liu and Maosong Sun.

- Fine-Grained Arabic Dialect Identification – Mohammad Salameh and Houda Bouamor.

- Generating Reasonable and Diversified Story Ending Using Sequence to Sequence Model with Adversarial Training – Zhongyang Li, Xiao Ding and Ting Liu.

- Generic refinement of expressive grammar formalisms with an application to discontinuous constituent parsing – Kilian Gebhardt.

- Genre Identification and the Compositional Effect of Genre in Literature – Joseph Worsham and Jugal Kalita.

- Gold Standard Annotations for Preposition and Verb Sense with Semantic Role Labels in Adult-Child Interactions – Lori Moon, Christos Christodoulopoulos, Cynthia Fisher, Sandra Franco and Dan Roth.

- Graph Based Decoding for Event Sequencing and Coreference Resolution – Zhengzhong Liu, Teruko Mitamura and Eduard Hovy.

- HL-EncDec: A Hybrid-Level Encoder-Decoder for Neural Response Generation – Sixing Wu, Dawei Zhang, Ying Li, Xing Xie and Zhonghai Wu.

- How Predictable is Your State? Leveraging Lexical and Contextual Information for Predicting Legislative Floor Action at the State Level – Vladimir Eidelman, Anastassia Kornilova and Daniel Argyle.

- Identifying Emergent Research Trends by Key Authors and Phrases – Shenhao Jiang, Animesh Prasad, Min-Yen Kan and Kazunari Sugiyama.

- If you’ve seen some, you’ve seen them all: Identifying variants of multiword expressions – Caroline Pasquer, Agata Savary, Carlos Ramisch and Jean-Yves Antoine.

- Improving Feature Extraction for Pathology Reports with Precise Negation Scope Detection – Olga Zamaraeva, Kristen Howell and Adam Rhine.

- Improving Named Entity Recognition by Jointly Learning to Disambiguate Morphological Tags – Onur Gungor, Suzan Uskudarli and Tunga Gungor.

- Incorporating Argument-Level Interactions for Persuasion Comments Evaluation using Co-attention Model – Lu Ji, Zhongyu Wei, Xiangkun Hu, Yang Liu, Qi Zhang and Xuanjing Huang.

- Incorporating Deep Visual Features into Multiobjective based Multi-view Search Result Clustering – Sayantan Mitra, Mohammed Hasanuzzaman and Sriparna Saha.

- Incorporating Image Matching Into Knowledge Acquisition for Event-Oriented Relation Recognition – Yu Hong, Yang Xu, Huibin Ruan, Bowei Zou, Jianmin Yao and Guodong Zhou.

- Incorporating Syntactic Uncertainty in Neural Machine Translation with a Forest-to-Sequence Model – Poorya Zaremoodi and Gholamreza Haffari.

- Incremental Natural Language Processing: Challenges, Strategies, and Evaluation – Arne Köhn.

- Indigenous language technologies in Canada: Assessment, challenges, and successes – Patrick Littell, Anna Kazantseva, Roland Kuhn, Aidan Pine, Antti Arppe, Christopher Cox and Marie-Odile Junker.

- Information Aggregation via Dynamic Routing for Sequence Encoding – Jingjing Gong, Xipeng Qiu, Shaojing Wang and Xuanjing Huang.

- Integrating Tree Structures and Graph Structures with Neural Networks to Classify Discussion Discourse Acts – Yasuhide Miura, Ryuji Kano, Motoki Taniguchi, Tomoki Taniguchi, Shotaro Misawa and Tomoko Ohkuma.

- Interaction-Aware Topic Model for Microblog Conversations through Network Embedding and User Attention – Ruifang He, Xuefei Zhang, Di Jin, Longbiao Wang, Jianwu Dang and Xiangang Li.

- Interpretation of Implicit Conditions in Database Search Dialogues – Shunya Fukunaga, Hitoshi Nishikawa, Takenobu Tokunaga, Hikaru Yokono and Tetsuro Takahashi.

- Investigating the Working of Text Classifiers – Devendra Sachan, Manzil Zaheer and Ruslan Salakhutdinov.

- iParaphrasing: Extracting Visually Grounded Paraphrases via an Image – Chenhui Chu, Mayu Otani and Yuta Nakashima.

- ISO-Standard Domain-Independent Dialogue Act Tagging for Conversational Agents – Stefano Mezza, Alessandra Cervone, Evgeny Stepanov, Giuliano Tortoreto and Giuseppe Riccardi.

- Joint Learning from Labeled and Unlabeled Data for Information Retrieval – Bo Li, Ping Cheng and Le Jia.

- Joint Neural Entity Disambiguation with Output Space Search – Hamed Shahbazi, Xiaoli Fern, Reza Ghaeini, Chao Ma, Rasha Mohammad Obeidat and Prasad Tadepalli.

- JTAV: Jointly Learning Social Media Content Representation by Fusing Textual, Acoustic, and Visual Features – Hongru Liang, Haozheng Wang, Jun Wang, Shaodi You, Zhe Sun, Jin-Mao Wei and Zhenglu Yang.

- Killing Four Birds with Two Stones: Multi-Task Learning for Non-Literal Language Detection – Erik-Lân Do Dinh, Steffen Eger and Iryna Gurevych.

- LCQMC: A Large-scale Chinese Question Matching Corpus – Xin Liu, Qingcai Chen, Chong Deng, Huajun Zeng, Jing Chen, Dongfang Li and Buzhou Tang.

- Learning Emotion-enriched Word Representations – Ameeta Agrawal, Aijun An and Manos Papagelis.

- Learning Multilingual Topics from Incomparable Corpora – Shudong Hao and Michael J. Paul.

- Learning Semantic Sentence Embeddings using Sequential Pair-wise Discriminator – Badri Narayana Patro, Vinod Kumar Kurmi, Sandeep Kumar and Vinay Namboodiri.

- Learning to Progressively Recognize New Named Entities with Sequence to Sequence Model – Lingzhen Chen and Alessandro Moschitti.

- Learning to Search in Long Documents Using Document Structure – Mor Geva and Jonathan Berant.

- Learning Visually-Grounded Semantics from Contrastive Adversarial Samples – Haoyue Shi, Jiayuan Mao, Tete Xiao, Yuning Jiang and Jian Sun.

- Learning What to Share: Leaky Multi-Task Network for Text Classification – Liqiang Xiao, Honglun Zhang, Wenqing Chen, Yongkun Wang and Yaohui Jin.

- Learning with Noise-Contrastive Estimation: Easing training by learning to scale – Matthieu Labeau and Alexandre Allauzen.

- Leveraging Meta-Embeddings for Bilingual Lexicon Extraction from Specialized Comparable Corpora – Amir Hazem and Emmanuel Morin.

- Lexi: A tool for adaptive, personalized text simplification – Joachim Bingel, Gustavo Paetzold and Anders Søgaard.

- Local String Transduction as Sequence Labeling – Joana Ribeiro, Shashi Narayan, Shay B. Cohen and Xavier Carreras.

- Location Name Extraction from Targeted Text Streams using Gazetteer-based Statistical Language Models – Hussein Al-Olimat, Krishnaprasad Thirunarayan, Valerie Shalin and Amit Sheth.

- MCDTB: A Macro-level Chinese Discourse TreeBank – Feng Jiang, Sheng Xu, Xiaomin Chu, Peifeng Li, Qiaoming Zhu and Guodong Zhou.

- MEMD: A Diversity-Promoting Learning Framework for Short-Text Conversation – Meng Zou, Xihan Li, Haokun Liu and Zhihong Deng.

- Modeling Multi-turn Conversation with Deep Utterance Aggregation – Zhuosheng Zhang, Jiangtong Li, Pengfei Zhu and Hai Zhao.

- Modeling the Readability of German Targeting Adults and Children: An empirically broad analysis and its cross-corpus validation – Zarah Weiß and Detmar Meurers.

- Multi-layer Representation Fusion for Neural Machine Translation – Qiang Wang, Fuxue Li, Tong Xiao, Yanyang Li, Yinqiao Li and Jingbo Zhu.

- Multi-Perspective Context Aggregation for Semi-supervised Cloze-style Reading Comprehension – Liang Wang, Sujian Li, Wei Zhao, Kewei Shen, Meng Sun, Ruoyu Jia and Jingming Liu.

- Multi-Source Multi-Class Fake News Detection – Hamid Karimi, Proteek Roy, Sari Saba-Sadiya and Jiliang Tang.

- Multi-task and Multi-lingual Joint Learning of Neural Lexical Utterance Classification based on Partially-shared Modeling – Ryo Masumura, Tomohiro Tanaka, Ryuichiro Higashinaka, Hirokazu Masataki and Yushi Aono.

- Multi-task dialog act and sentiment recognition on Mastodon – Christophe Cerisara, Somayeh Jafaritazehjani, Adedayo Oluokun and Hoa T. Le.

- Multi-Task Learning for Sequence Tagging: An Empirical Study – Soravit Changpinyo, Hexiang Hu and Fei Sha.

- Multi-Task Neural Models for Translating Between Styles Within and Across Languages – Xing Niu, Sudha Rao and Marine Carpuat.

- Narrative Schema Stability in News Text – Dan Simonson and Anthony Davis.

- Natural Language Interface for Databases Using a Dual-Encoder Model – Ionel Alexandru Hosu, Radu Cristian Alexandru Iacob, Florin Brad, Stefan Ruseti and Traian Rebedea.

- Neural Machine Translation Incorporating Named Entity – Arata Ugawa, Akihiro Tamura, Takashi Ninomiya, Hiroya Takamura and Manabu Okumura.

- Neural Math Word Problem Solver with Reinforcement Learning – Danqing Huang, Jing Liu, Chin-Yew Lin and Jian Yin.

- NIPS Conversational Intelligence Challenge 2017 Winner System: Skill-based Conversational Agent with Supervised Dialog Manager – Idris Yusupov and Yurii Kuratov.

- One vs. Many QA Matching with both Word-level and Sentence-level Attention Network – Lu Wang, Shoushan Li, Changlong Sun, Luo Si, Xiaozhong Liu, Min Zhang and Guodong Zhou.

- Open-Domain Event Detection using Distant Supervision – Jun Araki and Teruko Mitamura.

- Par4Sim — Adaptive Paraphrasing for Text Simplification – Seid Muhie Yimam and Chris Biemann.

- Parallel Corpora for bi-lingual English-Ethiopian Languages Statistical Machine Translation – Michael Melese, Solomon Teferra Abate, Martha Yifiru Tachbelie, Million Meshesha, Wondwossen Mulugeta, Yaregal Assibie, Solomon Atinafu, Binyam Ephrem, Tewodros Abebe, Hafte Abera, Amanuel Lemma, Tsegaye Andargie, Seifedin Shifaw and Wondimagegnhue Tsegaye.

- Part-of-Speech Tagging on an Endangered Language: a Parallel Griko-Italian Resource – Antonios Anastasopoulos, Marika Lekakou, Josep Quer, Eleni Zimianiti, Justin DeBenedetto and David Chiang.

- Personalizing Lexical Simplification – John Lee and Chak Yan Yeung.

- Pluralizing Nouns across Agglutinating Bantu Languages – Joan Byamugisha, C. Maria Keet and Brian DeRenzi.

- Point Precisely: Towards Ensuring the Precision of Data in Generated Texts Using Delayed Copy Mechanism – Liunian Li and Xiaojun Wan.

- Projecting Embeddings for Domain Adaption: Joint Modeling of Sentiment Analysis in Diverse Domains – Jeremy Barnes, Roman Klinger and Sabine Schulte im Walde.

- Reading Comprehension with Graph-based Temporal-Causal Reasoning – Yawei Sun, Gong Cheng and Yuzhong Qu.

- Real-time Change Point Detection using On-line Topic Models – Yunli Wang and Cyril Goutte.

- Refining Source Representations with Relation Networks for Neural Machine Translation – Wen Zhang, Jiawei Hu, Yang Feng and Qun Liu.

- Representation Learning of Entities and Documents from Knowledge Base Descriptions – Ikuya Yamada, Hiroyuki Shindo and Yoshiyasu Takefuji.

- Reproducing and Regularizing the SCRN Model – Olzhas Kabdolov, Zhenisbek Assylbekov and Rustem Takhanov.

- Responding E-commerce Product Questions via Exploiting QA Collections and Reviews – Qian Yu, Wai Lam and Zihao Wang.

- ReSyf: a French lexicon with ranked synonyms – Mokhtar Boumedyen Billami, Thomas François and Nuria Gala.

- Retrofitting Distributional Embeddings to Knowledge Graphs with Functional Relations – Ben Lengerich, Andrew Maas and Christopher Potts.

- Revisiting the Hierarchical Multiscale LSTM – Ákos Kádár, Marc-Alexandre Côté, Grzegorz Chrupała and Afra Alishahi.

- Rich Character-Level Information for Korean Morphological Analysis and Part-of-Speech Tagging – Andrew Matteson, Chanhee Lee, Youngbum Kim and Heuiseok Lim.

- Robust Lexical Features for Improved Neural Network Named-Entity Recognition – Abbas Ghaddar and Phillippe Langlais.

- RuSentiment: An Enriched Sentiment Analysis Dataset for Social Media in Russian – Anna Rogers, Alexey Romanov, Anna Rumshisky, Svitlana Volkova, Mikhail Gronas and Alex Gribov.

- Scoring and Classifying Implicit Positive Interpretations: A Challenge of Class Imbalance – Chantal van Son, Roser Morante, Lora Aroyo and Piek Vossen.

- Semantic Parsing for Technical Support Questions – Abhirut Gupta, Anupama Ray, Gargi Dasgupta, Gautam Singh, Pooja Aggarwal and Prateeti Mohapatra.

- Sensitivity to Input Order: Evaluation of an Incremental and Memory-Limited Bayesian Cross-Situational Word Learning Model – Sepideh Sadeghi and Matthias Scheutz.

- Sentence Weighting for Neural Machine Translation Domain Adaptation – Shiqi Zhang and Deyi Xiong.

- Seq2seq Dependency Parsing – Zuchao Li, Jiaxun Cai, Shexia He and Hai Zhao.

- Sequence-to-Sequence Learning for Task-oriented Dialogue with Dialogue State Representation – Haoyang Wen, Yijia Liu, Wanxiang Che, Libo Qin and Ting Liu.

- SeVeN: Augmenting Word Embeddings with Unsupervised Relation Vectors – Luis Espinosa Anke and Steven Schockaert.

- Simple Neologism Based Domain Independent Models to Predict Year of Authorship – Vivek Kulkarni, Yingtao Tian, Parth Dandiwala and Steve Skiena.

- Sliced Recurrent Neural Networks – Zeping Yu and Gongshen Liu.

- SMHD: a Large-Scale Resource for Exploring Online Language Usage for Multiple Mental Health Conditions – Arman Cohan, Bart Desmet, Andrew Yates, Luca Soldaini, Sean MacAvaney and Nazli Goharian.

- Source Critical Reinforcement Learning for Transferring Spoken Language Understanding to a New Language – He Bai, Yu Zhou, Jiajun Zhang, Liang Zhao, Mei-Yuh Hwang and Chengqing Zong.

- Stance Detection with Hierarchical Attention Network – Qingying Sun, Zhongqing Wang, Qiaoming Zhu and Guodong Zhou.

- Structured Representation Learning for Online Debate Stance Prediction – Chang Li, Aldo Porco and Dan Goldwasser.

- Style Detection for Free Verse Poetry from Text and Speech – Timo Baumann, Hussein Hussein and Burkhard Meyer-Sickendiek.

- Style Obfuscation by Invariance – Chris Emmery, Enrique Manjavacas Arevalo and Grzegorz Chrupała.

- Summarization Evaluation in the Absence of Human Model Summaries Using the Compositionality of Word Embeddings – Elaheh ShafieiBavani, Mohammad Ebrahimi, Raymond Wong and Fang Chen.

- Synonymy in Bilingual Context: The CzEngClass Lexicon – Zdenka Uresova, Eva Fucikova, Eva Hajicova and Jan Hajic.

- Tailoring Neural Architectures for Translating from Morphologically Rich Languages – Peyman Passban, Andy Way and Qun Liu.

- Task-oriented Word Embedding for Text Classification – Qian Liu, Heyan Huang, Yang Gao, Xiaochi Wei, Yuxin Tian and Luyang Liu.

- The APVA-TURBO Approach To Question Answering in Knowledge Base – Yue Wang, Richong Zhang, Cheng Xu and Yongyi Mao.

- Toward Better Loanword Identification in Uyghur Using Cross-lingual Word Embeddings – Chenggang Mi, Yating Yang, Lei Wang, Xi Zhou and Tonghai Jiang.

- Towards a Language for Natural Language Treebank Transductions – Carlos A. Prolo.

- Towards an argumentative content search engine using weak supervision – Ran Levy, Ben Bogin, Shai Gretz, Ranit Aharonov and Noam Slonim.

- Transfer Learning for a Letter-Ngrams to Word Decoder in the Context of Historical Handwriting Recognition with Scarce Resources – Adeline Granet, Emmanuel Morin, Harold Mouchère, Solen Quiniou and Christian Viard-Gaudin.

- Transfer Learning for Entity Recognition of Novel Classes – Juan Diego Rodriguez, Adam Caldwell and Alexander Liu.

- Twitter corpus of Resource-Scarce Languages for Sentiment Analysis and Multilingual Emoji Prediction – Nurendra Choudhary, Rajat Singh, Vijjini Anvesh Rao and Manish Shrivastava.

- Urdu Word Segmentation using Conditional Random Fields (CRFs) – Haris Bin Zia, Agha Ali Raza and Awais Athar.

- User-Level Race and Ethnicity Predictors from Twitter Text – Daniel Preoţiuc-Pietro and Lyle Ungar.

- Using Formulaic Expressions in Writing Assistance Systems – Kenichi Iwatsuki and Akiko Aizawa.

- Using Word Embeddings for Unsupervised Acronym Disambiguation – Jean Charbonnier and Christian Wartena.

- Visual Question Answering Dataset for Bilingual Image Understanding: A Study of Cross-Lingual Transfer Using Attention Maps – Nobuyuki Shimizu, Na Rong and Takashi Miyazaki.

- Vocabulary Tailored Summary Generation – Kundan Krishna, Aniket Murhekar, Saumitra Sharma and Balaji Vasan Srinivasan.

- What’s in Your Embedding, And How It Predicts Task Performance – Anna Rogers, Shashwath Hosur Ananthakrishna and Anna Rumshisky.

- Who Feels What and Why? Annotation of a Literature Corpus with Semantic Roles of Emotions – Evgeny Kim and Roman Klinger.

- Why does PairDiff work? – A Mathematical Analysis of Bilinear Relational Compositional Operators for Analogy Detection – Huda Hakami, Kohei Hayashi and Danushka Bollegala.

- WikiRef: Wikilinks as a route to recommending appropriate references for scientific Wikipedia pages – Abhik Jana, Pranjal Kanojiya, Pawan Goyal and Animesh Mukherjee.

- Word Sense Disambiguation Based on Word Similarity Calculation Using Word Vector Representation from a Knowledge-based Graph – Dongsuk O, Sunjae Kwon, Kyungsun Kim and Youngjoong Ko.

COLING 2018 Best papers

There are multiple categories of award at COLING 2018, as we laid out in an earlier blog post. We received 44 nominations for best papers over ten categories, and conferred best paper awards in the categories as follows:

- Best error analysis: SGM: Sequence Generation Model for Multi-label Classification, by Pengcheng Yang, Xu Sun, Wei Li, Shuming Ma, Wei Wu and Houfeng Wang.

- Best evaluation: SGM: Sequence Generation Model for Multi-label Classification, by Pengcheng Yang, Xu Sun, Wei Li, Shuming Ma, Wei Wu and Houfeng Wang.

- Best linguistic analysis: Distinguishing affixoid formations from compounds, by Josef Ruppenhofer, Michael Wiegand, Rebecca Wilm and Katja Markert

- Best NLP engineering experiment: Authorless Topic Models: Biasing Models Away from Known Structure, by Laure Thompson and David Mimno

- Best position paper: Arguments and Adjuncts in Universal Dependencies, by Adam Przepiórkowski and Agnieszka Patejuk

- Best reproduction paper: Neural Network Models for Paraphrase Identification, Semantic Textual Similarity, Natural Language Inference, and Question Answering, by Wuwei Lan and Wei Xu

- Best resource paper: AnlamVer: Semantic Model Evaluation Dataset for Turkish – Word Similarity and Relatedness, by Gökhan Ercan and Olcay Taner Yıldız

- Best survey paper: A Survey on Open Information Extraction, by Christina Niklaus, Matthias Cetto, André Freitas and Siegfried Handschuh

- Most reproducible: Design Challenges and Misconceptions in Neural Sequence Labeling, by Jie Yang, Shuailong Liang and Yue Zhang

Note that, as announced last year, in the interest of open science and reproducibility, COLING 2018 did not confer best paper awards on papers whose authors could not make the code/resources publicly available by camera-ready time. This means you can ask the best paper authors for associated data and programs right now, and they should be able to provide you with a link.

In addition, we would like to note the following papers as “Area Chair Favorites”, which were nominated by reviewers and recognised as excellent by chairs.

- Visual Question Answering Dataset for Bilingual Image Understanding: A study of cross-lingual transfer using attention maps. Nobuyuki Shimizu, Na Rong and Takashi Miyazaki

- Using J-K-fold Cross Validation To Reduce Variance When Tuning NLP Models. Henry Moss, David Leslie and Paul Rayson

- Measuring the Diversity of Automatic Image Descriptions. Emiel van Miltenburg, Desmond Elliott and Piek Vossen

- Reading Comprehension with Graph-based Temporal-Causal Reasoning. Yawei Sun, Gong Cheng and Yuzhong Qu

- Diachronic word embeddings and semantic shifts: a survey. Andrey Kutuzov, Lilja Øvrelid, Terrence Szymanski and Erik Velldal

- Transfer Learning for Entity Recognition of Novel Classes. Juan Diego Rodriguez, Adam Caldwell and Alexander Liu

- Joint Modeling of Structure Identification and Nuclearity Recognition in Macro Chinese Discourse Treebank. Xiaomin Chu, Feng Jiang, Yi Zhou, Guodong Zhou and Qiaoming Zhu

- Unsupervised Morphology Learning with Statistical Paradigms. Hongzhi Xu, Mitchell Marcus, Charles Yang and Lyle Ungar

- Challenges of language technologies for the Americas indigenous languages. Manuel Mager, Ximena Gutierrez-Vasques, Gerardo Sierra and Ivan Meza-Ruiz

- A Lexicon-Based Supervised Attention Model for Neural Sentiment Analysis. Yicheng Zou, Tao Gui, Qi Zhang and Xuanjing Huang

- From Text to Lexicon: Bridging the Gap between Word Embeddings and Lexical Resources. Ilia Kuznetsov and Iryna Gurevych

- The Road to Success: Assessing the Fate of Linguistic Innovations in Online Communities. Marco Del Tredici and Raquel Fernández

- Relation Induction in Word Embeddings Revisited. Zied Bouraoui, Shoaib Jameel and Steven Schockaert

- Learning with Noise-Contrastive Estimation: Easing training by learning to scale. Matthieu Labeau and Alexandre Allauzen

- Stress Test Evaluation for Natural Language Inference. Aakanksha Naik, Abhilasha Ravichander, Norman Sadeh, Carolyn Rose and Graham Neubig

- Recurrent One-Hop Predictions for Reasoning over Knowledge Graphs. Wenpeng Yin, Yadollah Yaghoobzadeh and Hinrich Schütze

- SMHD: a Large-Scale Resource for Exploring Online Language Usage for Multiple Mental Health Conditions. Arman Cohan, Bart Desmet, Andrew Yates, Luca Soldaini, Sean MacAvaney and Nazli Goharian

- Automatically Extracting Qualia Relations for the Rich Event Ontology. Ghazaleh Kazeminejad, Claire Bonial, Susan Windisch Brown and Martha Palmer

- What represents “style” in authorship attribution? Kalaivani Sundararajan and Damon Woodard

- SeVeN: Augmenting Word Embeddings with Unsupervised Relation Vectors. Luis Espinosa Anke and Steven Schockaert

- GenSense: A Generalized Sense Retrofitting Model. Yang-Yin Lee, Ting-Yu Yen, Hen-Hsen Huang, Yow-Ting Shiue and Hsin-Hsi Chen

- A Multi-Attention based Neural Network with External Knowledge for Story Ending Predicting Task. Qian Li, Ziwei Li, Jin-Mao Wei, Yanhui Gu, Adam Jatowt and Zhenglu Yang

- Abstract Meaning Representation for Multi-Document Summarization. Kexin Liao, Logan Lebanoff and Fei Liu

- Cooperative Denoising for Distantly Supervised Relation Extraction. Kai Lei, Daoyuan Chen, Yaliang Li, Nan Du, Min Yang, Wei Fan and Ying Shen

- Dialogue Act Driven Conversation Model: An Experimental Study. Harshit Kumar, Arvind Agarwal and Sachindra Joshi

- Dynamic Multi-Level, Multi-Task Learning for Sentence Simplification. Han Guo, Ramakanth Pasunuru and Mohit Bansal

- A Knowledge-Augmented Neural Network Model for Implicit Discourse Relation Classification. Yudai Kishimoto, Yugo Murawaki and Sadao Kurohashi

- Abstractive Multi-Document Summarization using Paraphrastic Sentence Fusion. Mir Tafseer Nayeem, Tanvir Ahmed Fuad and Yllias Chali

- They Exist! Introducing Plural Mentions to Coreference Resolution and Entity Linking. Ethan Zhou and Jinho D. Choi

- A Comparison of Transformer and Recurrent Neural Networks on Multilingual NMT. Surafel Melaku Lakew, Mauro Cettolo and Marcello Federico

- Expressively vulgar: The socio-dynamics of vulgarity and its effects on sentiment analysis in social media. Isabel Cachola, Eric Holgate, Daniel Preoţiuc-Pietro and Junyi Jessy Li

- On Adversarial Examples for Character-Level Neural Machine Translation. Javid Ebrahimi, Daniel Lowd and Dejing Dou

- Neural Transition-based String Transduction for Limited-Resource Setting in Morphology. Peter Makarov and Simon Clematide

- Structured Dialogue Policy with Graph Neural Networks. Lu Chen, Bowen Tan, Sishan Long and Kai Yu

We would like to recognise with exceptional thanks our best paper committee.

Review statistics

There has been a lot to measure in our review process at COLING so far. Here are a few of the numbers.

Firstly, it’s interesting to see how many reviewers recommend the authors cite them. We can’t evaluate how appropriate this is, but it happened in 68 out of 2806 reviews (2.4%).

Best paper nominations are quite rare in general. This gives very little signal for the best paper committee to work with. To gain more information, in addition to asking whether a paper warranted further recognition, we asked reviewers to say if a given paper was the best out of those they had reviewed. This worked well for 747 reviewers, but 274 reviewers (26.8%) said no paper they reviewed was the best of their reviewing allocation.

Mean scores and confidence can be broken down by type, as follows.

| Paper type | Mean score | Mean confidence |
| --- | --- | --- |
| Computationally-aided linguistic analysis | 2.85 | 3.42 |
| NLP engineering experiment paper | 2.86 | 3.51 |
| Position paper | 2.41 | 3.36 |
| Reproduction paper | 2.92 | 3.54 |
| Resource paper | 2.76 | 3.50 |
| Survey paper | 2.93 | 3.58 |

We can see that reviewers were least confident with position papers, and were both most confident and most pleased with survey papers—though reproduction papers came in a close second in regard to mean score. This fits the general expectation that position papers are hard to evaluate.
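As a quick sanity check, the ranking described here can be reproduced from the table. This is only a minimal sketch: the figures are copied from the table above, and the variable names are illustrative rather than part of any COLING tooling.

```python
# Mean review score and mean reviewer confidence per paper type,
# copied by hand from the table in this post.
stats = {
    "Computationally-aided linguistic analysis": (2.85, 3.42),
    "NLP engineering experiment paper": (2.86, 3.51),
    "Position paper": (2.41, 3.36),
    "Reproduction paper": (2.92, 3.54),
    "Resource paper": (2.76, 3.50),
    "Survey paper": (2.93, 3.58),
}

# Rank paper types from highest to lowest on each measure.
by_score = sorted(stats, key=lambda t: stats[t][0], reverse=True)
by_confidence = sorted(stats, key=lambda t: stats[t][1], reverse=True)

print("Highest mean score:", by_score[0])
print("Second highest mean score:", by_score[1])
print("Highest confidence:", by_confidence[0])
print("Lowest confidence:", by_confidence[-1])
```

Note that position papers sit at the bottom on both measures, not only on confidence.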

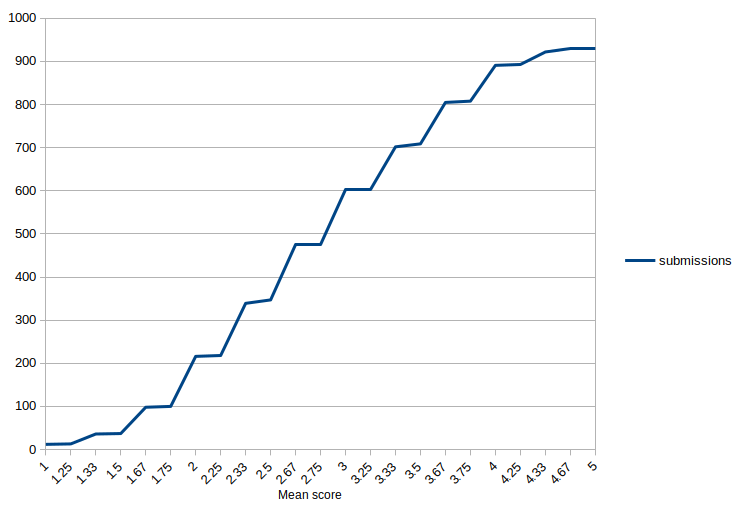

The overall distribution of scores follows.

Anonymity and Review

Anonymous review is a way of achieving a fairer process. The ongoing discussion among many in our field led to us examining how well this was really working, and rethinking how anonymity was implemented for COLING this year.

One step we took was to make sure that area chairs did not know who the authors were. This is important because area chairs are the ones putting forward recommendations based on reviews; area chairs are the people who mediate between borderline papers and acceptance, or who assess reviewer ratings to decide if they put a paper on the wrong side of the acceptance boundary. This is a critical and powerful role. So, we should be extra sure that if a venue has chosen to run an anonymized process, the area chairs don’t see paper authors’ names.

This policy caused a little initial surprise, but everyone has adapted quickly. For it to work, authors must continue to hide their identity, especially during the author response to chairs, which is the current stage of the process.

We also increased anonymity in reviewer discussion: reviewers did not and still do not know each other’s identities. To keep review tone professional, we will reveal reviewer identities to each other later in the process. So if you are one of our generous program committee members, you will be able to see who wrote that excellent review you saw, and also who left the blank one, on the submissions you also reviewed.

It’s established that signed reviews—that is, those including the reviewer’s name—are generally found by authors to be of better quality and tone. We gave an option to reviewers to sign their reviews. This time, 121 reviewers used this, out of 1020 active review authors (11.9%).

On the topic of anonymity, there have been a few rejections due to poor or absent anonymization. To help future authors, here are some ways anonymity can be broken.

- Linking to a personal or institutional github account and making it clear in the prose it is the authors’ (e.g. “We make this available at github.com/authorname/tool/”).

- Describing and citing prior work as “we showed”, “our previous work”, and so on

- Leaving names and affiliations on the front page

- Including unpublished papers in the bibliography

Some of these can be avoided by holding back references to one’s own past work during review and adding them only in the camera-ready copy, which is a strategy we recommend. Of course it’s not always possible, but in most of the cases we saw, refraining from self-citing would not have damaged the narrative and would have left the paper compliant.

The final step in the review process, from the author side, is author response to chairs. Please remember to keep yourself anonymous here—the chairs know neither author nor reviewer identities, which helps them be impartial.

COLING 2018 Submissions Overview

We’ve had a successful COLING so far, with over a thousand papers submitted, covering a variety of areas. In total, 1017 papers were submitted to the main conference, all full-length.

Each submitted paper had a distinct type assigned by the authors, which affects how it is reviewed. These types were developed based on our earlier blog post on paper types. The “NLP Engineering Experiment paper” was unsurprisingly the dominant type, though it made up only 65% of all papers. We were very happy to receive 25 survey papers, 31 position papers, and 35 reproduction papers, as well as a solid 106 resource papers and a strong showing of 163 computationally-aided linguistic analysis papers, the second largest contingent.
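The 65% figure can be recovered from the counts given in this post. The sketch below assumes that every one of the 1017 submissions carries exactly one of the six paper types, so the number of NLP engineering experiment papers, which is not stated directly, is whatever remains after subtracting the other five.

```python
# Counts stated in this post.
total_submissions = 1017
other_types = {
    "survey": 25,
    "position": 31,
    "reproduction": 35,
    "resource": 106,
    "computationally-aided linguistic analysis": 163,
}

# Assumption: each submission has exactly one of six types, so the
# remainder is the NLP engineering experiment count (a derived figure,
# not one reported in the post).
engineering = total_submissions - sum(other_types.values())
share = engineering / total_submissions

print(engineering)       # 657
print(f"{share:.1%}")    # 64.6%
```

Under that assumption the remainder is 657 papers, or 64.6%, which rounds to the 65% quoted.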

Some papers were withdrawn or desk rejected before review began in earnest. Between ACs and PC co-chairs, in total, 32 papers were rejected without review. Excluding desk rejects, so far 41 papers have been withdrawn from consideration by the authors.

Allocating papers to areas gave each area a mean and median of 27 papers. The largest area has 31 papers and the smallest 19. We interpret this as indicating that area chairs will not be overloaded, leading to better review quality and interpretation.

Who gets to author a paper? A note on the Vancouver recommendations

At COLING 2018, we require submitted work to follow the Vancouver Convention on authorship – i.e. who gets to be an author on a paper. This guest post by Željko Agić of ITU Copenhagen introduces the topic.

One of the basic principles of publishing scientific research is that research papers are authored and signed by researchers.

Recently, the tenet of authorship has sparked some very interesting discussions in our community. In light of the increased use of preprint servers, we have been questioning the *ACL conference publication workflows. These discussions mostly concerned peer review biases, but they also touched on authorship: Should we enable blind preprint publications?

The notion of unattributed publications mostly does not sit well with researchers. We do not even know how to cite such papers, while we can invoke entire research programs in our paper narratives through a single last name.

Authorship is of crucial importance in research, and not just in writing up our related work sections. This goes without saying for all of us fellow researchers. While in everyday language an author is simply a writer or an instigator of a piece of work, the question is slightly more nuanced in publishing scientific work:

- What activities qualify one for paper authorship?

- If there are multiple contributors, how should they be ordered?

- Who decides on the list of paper authors?

These questions have sparked many controversies over the centuries of scientific research. An F. D. C. Willard, short for Felis Domesticus Chester, has authored a physics paper, similar to Galadriel Mirkwood, a Tolkien-loving Afghan hound versed in medical research. Others have built on the shoulders of giants such as Mickey Mouse and his prolific group.

Yet, authorship is no laughing matter: It can make or break research careers, and its (un)fair treatment can make the difference between a wonderful research group and an uneasy one at the least. A fair and transparent approach to authorship is of particular importance to early-stage researchers. There, the tall tales of PhD students might include the following conjectures:

- The PIs in medical research just sign all the papers their students author.

- In algorithms research the author ordering is always alphabetical.

- Conference papers do not make explicit the individual author contributions.

- The first and the last author matter the most.

The curiosities and the conjectures listed above all stem from the fact that there seems to be no awareness of any standard rulebook to play by in publishing research. This in turn gives rise to the many different traditions in different fields.

Yet, there is a rulebook!

One prominent attempt to put forth a set of guidelines for determining authorship is the Vancouver Group recommendations. The Vancouver Group are the International Committee of Medical Journal Editors (ICMJE), who in 1985 introduced a set of criteria for authorship. The criteria have seen many updates over the years, to match the latest developments in research and publishing. Their scope far surpasses the topic of authorship and spans the scientific publication process: reviewing, editorial work, publishing, copyright, and the like.

While the recommendations do stem from the medical field, they have since been broadened and are now widely adopted. The following is an excerpt from the recommendations in relation to authorship criteria.

The ICMJE recommends that authorship be based on the following 4 criteria:

1. Substantial contributions to the conception or design of the work; or the acquisition, analysis, or interpretation of data for the work; AND

2. Drafting the work or revising it critically for important intellectual content; AND

3. Final approval of the version to be published; AND

4. Agreement to be accountable for all aspects of the work in ensuring that questions related to the accuracy or integrity of any part of the work are appropriately investigated and resolved.

(…)

All those designated as authors should meet all four criteria for authorship, and all who meet the four criteria should be identified as authors. Those who do not meet all four criteria should be acknowledged.

(…)

These authorship criteria are intended to reserve the status of authorship for those who deserve credit and can take responsibility for the work. The criteria are not intended for use as a means to disqualify colleagues from authorship who otherwise meet authorship criteria by denying them the opportunity to meet criterion #s 2 or 3.